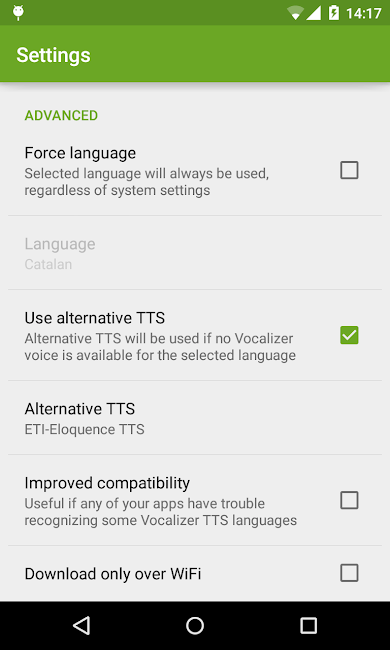

Given the rise of smart speakers and other devices that talk back to you, text-to-speech (TTS) is an important technology. Google’s solution for third-party developers is now generally available with new languages and features. Meanwhile, Cloud Speech-to-Text is also gaining new beta features.

Developed by DeepMind, WaveNet allows for high-fidelity voices in Cloud Text-to-Speech that sound more natural. Google opened up Cloud TTS to developers in March with a public beta, and the service is now generally available. No longer limited to US English, there are 17 new WaveNet voices, allowing developers to build apps for more languages. In total, Cloud Text-to-Speech now supports 30 standard voices and 26 WaveNet variants in 14 languages.

Meanwhile, given the wide range of use cases, Cloud TTS is launching Audio Profiles in beta. This allows developers to specify what device (headphones, smart speakers, or traditional telephony) the TTS is intended for so that Google can optimize the output. From headphones to speakers to phone lines, audio files can sound quite different on different playback media and mechanisms. The physical properties of each device, as well as the environment it is placed in, influence the range of frequencies and level of detail it produces (e.g., bass, treble and volume).
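To make the Audio Profiles idea concrete, here is a minimal sketch of what a synthesis request that targets a playback device might look like. It only builds the JSON body and sends nothing over the network. The field names (`audioConfig`, `effectsProfileId`) and the profile IDs are taken from Google's public Cloud Text-to-Speech REST documentation as best recalled, not from this article, so treat them as assumptions and check the current API reference. The helper `build_synthesize_request` is a hypothetical name used for illustration.

```python
import json

# Assumption: profile IDs as listed in Google's public docs; verify before use.
ASSUMED_PROFILE_IDS = {
    "headphones": "headphone-class-device",
    "smart_speaker": "small-bluetooth-speaker-class-device",
    "telephony": "telephony-class-application",
}


def build_synthesize_request(text, device, language_code="en-US", voice_name=None):
    """Build a JSON-serializable request body for a text:synthesize call.

    `device` is one of the keys in ASSUMED_PROFILE_IDS. This helper is
    hypothetical and is not part of any Google client library.
    """
    if device not in ASSUMED_PROFILE_IDS:
        raise ValueError(f"unknown device: {device!r}")

    voice = {"languageCode": language_code}
    if voice_name is not None:
        # A specific voice, for example a WaveNet variant, can be named here.
        voice["name"] = voice_name

    return {
        "input": {"text": text},
        "voice": voice,
        "audioConfig": {
            "audioEncoding": "MP3",
            # The audio profile tells the service which playback device
            # the output should be optimized for.
            "effectsProfileId": [ASSUMED_PROFILE_IDS[device]],
        },
    }


if __name__ == "__main__":
    body = build_synthesize_request("Your call is important to us.", "telephony")
    print(json.dumps(body, indent=2))
```

The same text can be requested once per target device by changing only the `device` argument, which mirrors the article's point that one audio file rarely suits headphones, speakers and phone lines equally well.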

